Report

This pipeline reports on the events and data points you select. Before configuring it, set up Adjust's CSV uploads if you have not already done so: use your Adjust dashboard to select the events and define the data points you want to send. Once set up, you will receive hourly CSV uploads to your storage bucket. You can read more about this endpoint and the procedure to set up Raw Data Export on the Adjust documentation page here

Configuring the Credentials

From the dropdown menu, select the account credentials that have access to the relevant Adjust Raw Data Export data, then click Next.

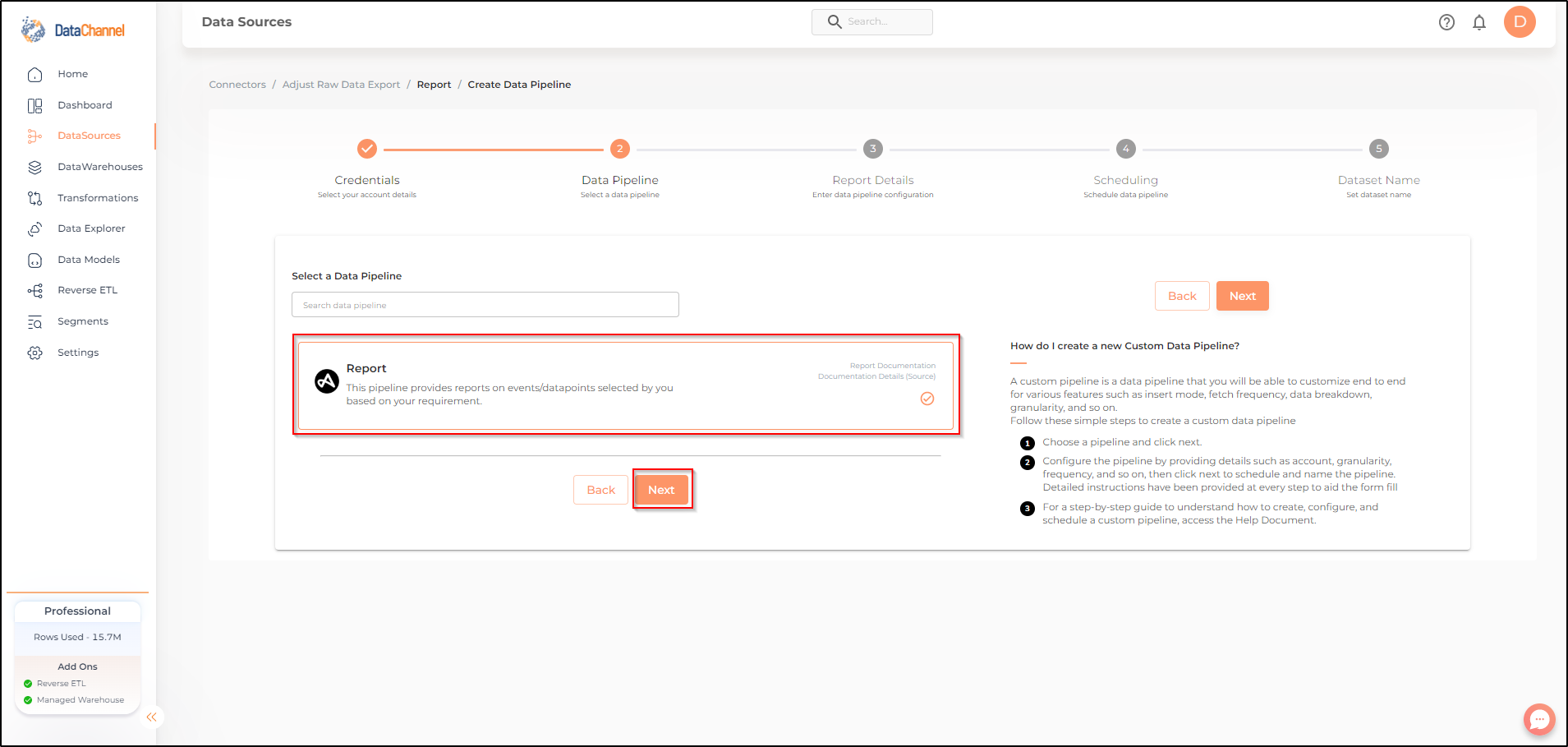

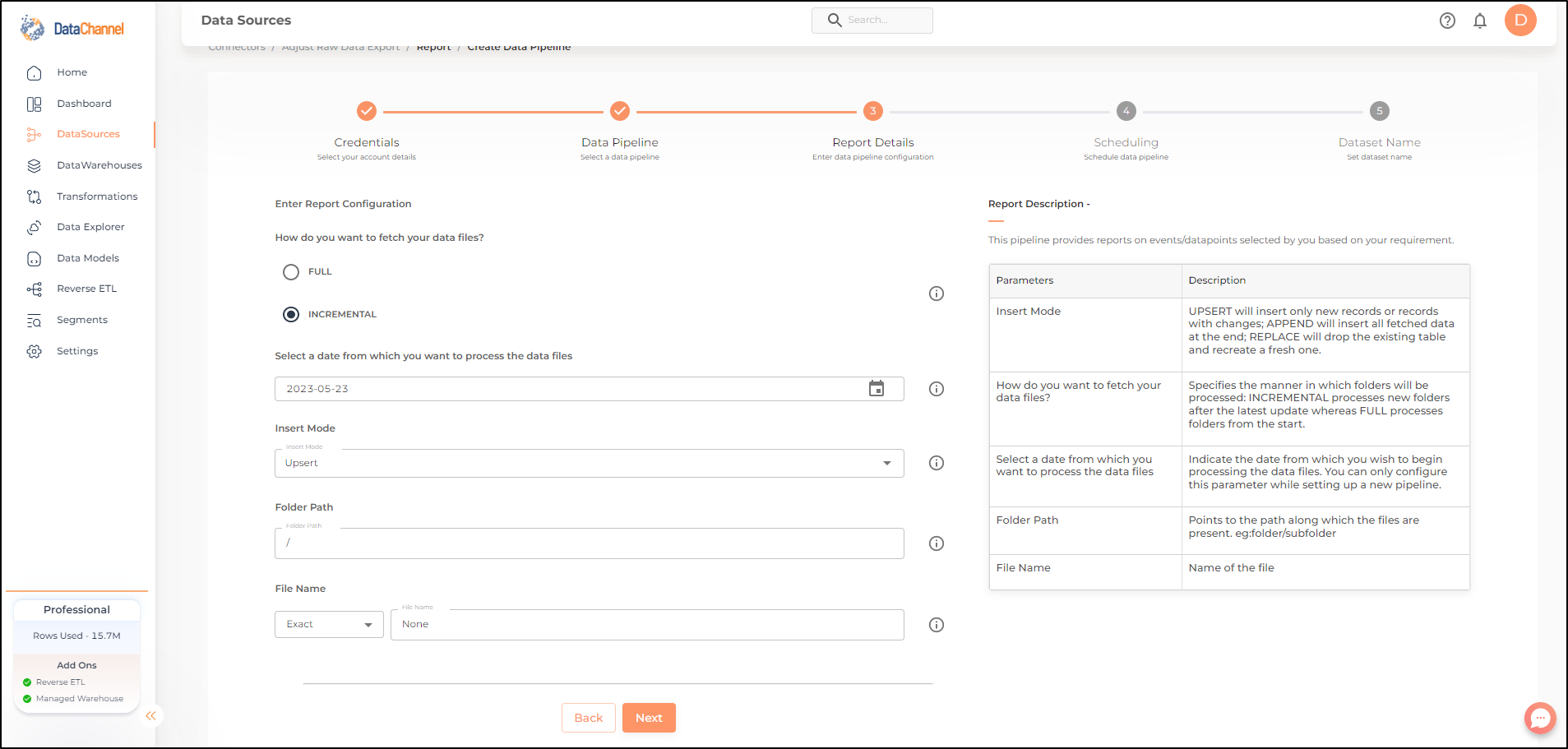

Setting Parameters

In the screen that appears, enter the configuration for this pipeline. Each parameter is described in detail below.

| Parameter | Description | Values |

|---|---|---|

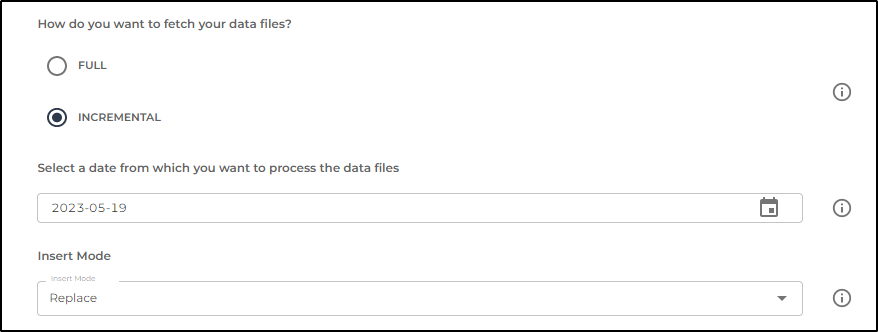

Fetch Mode |

Optional Specifies the manner in which data will be fetched from the Adjust Raw Data Export API : FULL will fetch the entire data in each run. INCREMENTAL will only fetch the new records after the latest update. It is recommended that the pipeline be executed in incremental mode as data lockers typically contain a large amount of data. |

{Full, Incremental} Default Value: INCREMENTAL |

Select a date from which you want to process the data files |

Optional Indicate the date from which you wish to begin processing the data files. You can only configure this parameter once while setting up a new pipeline. This parameter cannot be changed once the pipeline is configured. |

Specify the Start Date |

Insert Mode |

Required This refers to the manner in which data will get updated in the data warehouse, with 'Upsert' selected, the data will be upserted (only new records or records with changes. Data will be upserted on the field '_datachannel_timestamp'). With 'Append' selected, all data fetched will be inserted. Selecting 'Replace' will ensure the table is dropped and recreated with fresh data on each run. It is recommended to use 'Upsert' option unless there is a specific requirement. |

{Upsert, Append, Replace} Default Value: UPSERT |
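The three insert modes can be pictured with a small in-memory sketch. This is only an illustration of the behaviour described in the table, not how the warehouse load is actually implemented; the function name `apply_insert_mode` and the list-of-dicts table representation are assumptions made for the example.

```python
from typing import Dict, List

Row = Dict[str, object]


def apply_insert_mode(existing: List[Row], fetched: List[Row], mode: str,
                      key: str = "_datachannel_timestamp") -> List[Row]:
    """Return the table contents after loading `fetched` using `mode`.

    Hypothetical helper: tables are modelled as plain lists of dicts.
    """
    mode = mode.upper()
    if mode == "REPLACE":
        # The table is dropped and recreated from the fetched rows only.
        return list(fetched)
    if mode == "APPEND":
        # Every fetched row is inserted, even if it duplicates an old one.
        return existing + fetched
    if mode == "UPSERT":
        # Rows sharing a key value are overwritten; unseen keys are added.
        merged = {row[key]: row for row in existing}
        for row in fetched:
            merged[row[key]] = row
        return list(merged.values())
    raise ValueError(f"unknown insert mode: {mode}")


existing = [
    {"_datachannel_timestamp": 1, "event": "install"},
    {"_datachannel_timestamp": 2, "event": "open"},
]
fetched = [
    {"_datachannel_timestamp": 2, "event": "purchase"},
    {"_datachannel_timestamp": 3, "event": "open"},
]
upserted = apply_insert_mode(existing, fetched, "Upsert")
appended = apply_insert_mode(existing, fetched, "Append")
replaced = apply_insert_mode(existing, fetched, "Replace")
```

In this sketch 'Append' keeps both rows with timestamp 2, while 'Upsert' keeps only the newer one, which is why 'Upsert' avoids duplicates when the same files are processed again.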

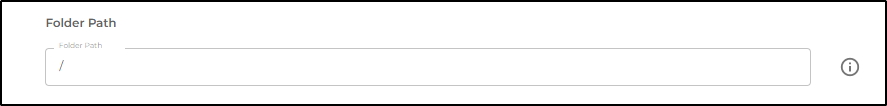

- Folder Path: Enter the folder path in the bucket, for example gs://your-bucket/abc/

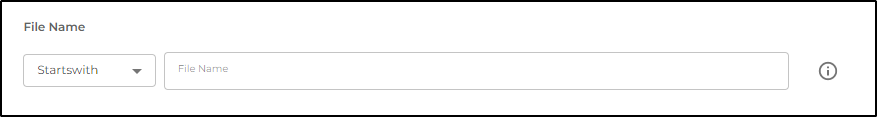

- File: Enter the exact file name or a part of it. For example, if the file name is abc_def_filename.csv, you can select 'starts with' from the dropdown and enter 'abc'.
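As a rough sketch of how the Folder Path and File settings narrow down which files are picked up, the snippet below splits a `gs://` path into bucket and prefix and filters a list of file names. The helper names and the 'contains' and 'exact' match options are assumptions for illustration; only 'starts with' is named in the text above.

```python
from typing import List, Tuple
from urllib.parse import urlparse


def split_folder_path(folder_path: str) -> Tuple[str, str]:
    """Split a path like gs://your-bucket/abc/ into (bucket, prefix)."""
    parsed = urlparse(folder_path)
    return parsed.netloc, parsed.path.lstrip("/")


def filter_files(names: List[str], pattern: str,
                 match: str = "starts with") -> List[str]:
    """Keep the file names that satisfy the chosen match option."""
    if match == "starts with":
        return [n for n in names if n.startswith(pattern)]
    if match == "contains":  # hypothetical option, not confirmed by the UI
        return [n for n in names if pattern in n]
    if match == "exact":  # hypothetical label for an exact file name
        return [n for n in names if n == pattern]
    raise ValueError(f"unknown match option: {match}")


bucket, prefix = split_folder_path("gs://your-bucket/abc/")
names = ["abc_def_filename.csv", "xyz_report.csv", "abc_other.csv"]
selected = filter_files(names, "abc", "starts with")
```

Note that a short pattern such as 'abc' also matches abc_other.csv here, so a longer prefix is safer when several exports share a folder.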

Datapipeline Scheduling

Scheduling specifies the frequency with which data will get updated in the data warehouse. You can choose between Manual Run, Normal Scheduling or Advance Scheduling.

- Manual Run: If scheduling is not required, you can use the toggle to run the pipeline manually.

- Normal Scheduling: Use the dropdown to select an interval-based frequency: hourly, daily, weekly, or monthly.

- Advance Scheduling: Set fine-grained schedules at the level of months, days, hours, and minutes.

A detailed explanation of pipeline scheduling can be found here
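To make the interval-based frequencies concrete, here is a minimal sketch of how the next run time could be derived from the previous one. It is an assumption about what "interval-based" means in practice, not a description of the scheduler itself; `next_run` is a hypothetical helper, and clamping the day to the end of a shorter month is a design choice made for the example.

```python
import calendar
from datetime import datetime, timedelta


def next_run(last_run: datetime, frequency: str) -> datetime:
    """Return the next run time for an interval-based schedule."""
    if frequency == "hourly":
        return last_run + timedelta(hours=1)
    if frequency == "daily":
        return last_run + timedelta(days=1)
    if frequency == "weekly":
        return last_run + timedelta(weeks=1)
    if frequency == "monthly":
        # Move to the same day of the following month, clamping the day
        # when that month is shorter (for example 31 January -> 29 February).
        year = last_run.year + last_run.month // 12
        month = last_run.month % 12 + 1
        day = min(last_run.day, calendar.monthrange(year, month)[1])
        return last_run.replace(year=year, month=month, day=day)
    raise ValueError(f"unknown frequency: {frequency}")


after_hour = next_run(datetime(2024, 3, 10, 9, 0), "hourly")
after_month = next_run(datetime(2024, 1, 31, 9, 0), "monthly")
after_december = next_run(datetime(2023, 12, 15, 9, 0), "monthly")
```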

Dataset & Name

- Dataset Name: Enter the dataset name, which also serves as the table name in your data warehouse. The name must be unique across the account and the data source. Special characters (except underscore _) and blank spaces are not allowed. Following a consistent naming scheme makes the tables easier to find later.

- Dataset Description: Enter a short description (optional) of the dataset being fetched by this particular pipeline.

- Notifications: Choose the events for which you’d like to be notified: "ERROR ONLY" or "ERROR AND SUCCESS".
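The Dataset Name rules above can be checked before submitting the form. The sketch below is one possible pre-check, assuming that "no special characters" means ASCII letters, digits, and underscores only and that uniqueness is case-sensitive; both are assumptions, and `is_valid_dataset_name` is a hypothetical helper rather than part of the product.

```python
import re
from typing import Set

# Assumption: only ASCII letters, digits, and underscores are accepted.
_NAME_PATTERN = re.compile(r"[A-Za-z0-9_]+")


def is_valid_dataset_name(name: str, existing_names: Set[str]) -> bool:
    """Check a candidate name against the stated naming rules."""
    if not _NAME_PATTERN.fullmatch(name):
        # Rejects empty names, blank spaces, and special characters.
        return False
    # The name must not clash with one already used in the account.
    return name not in existing_names


existing_names = {"adjust_installs"}
ok = is_valid_dataset_name("adjust_raw_events", existing_names)
has_space = is_valid_dataset_name("adjust raw events", existing_names)
duplicate = is_valid_dataset_name("adjust_installs", existing_names)
```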

Once you have finished, click Finish to save the pipeline. Read more about naming and saving your pipelines, including the option to save them as templates, here

Still have Questions?

We’ll be happy to help you with any questions you might have! Send us an email at info@datachannel.co.
